Monitor and Improve GPU Usage for Training Deep Learning Models | by Lukas Biewald | Towards Data Science

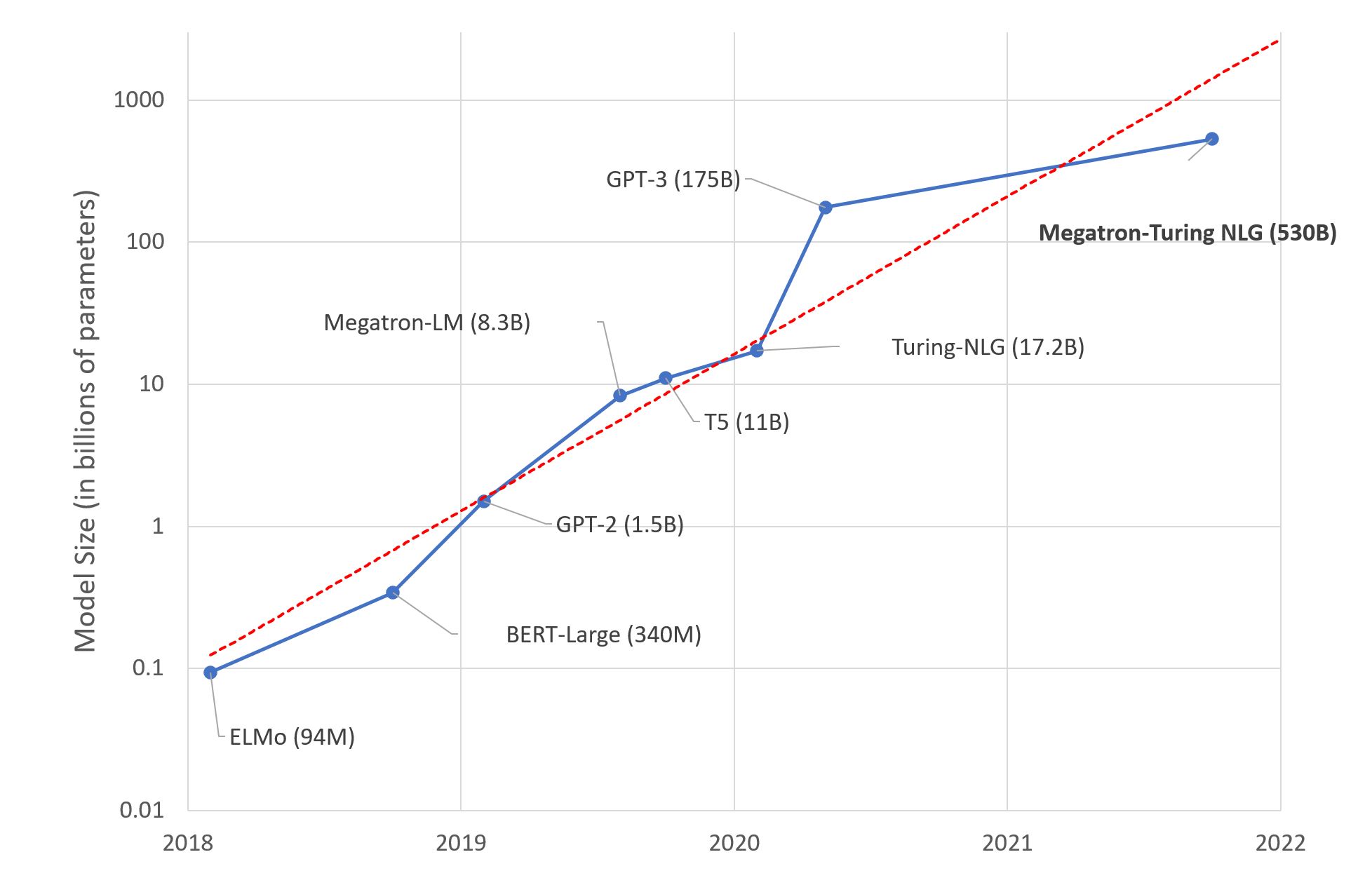

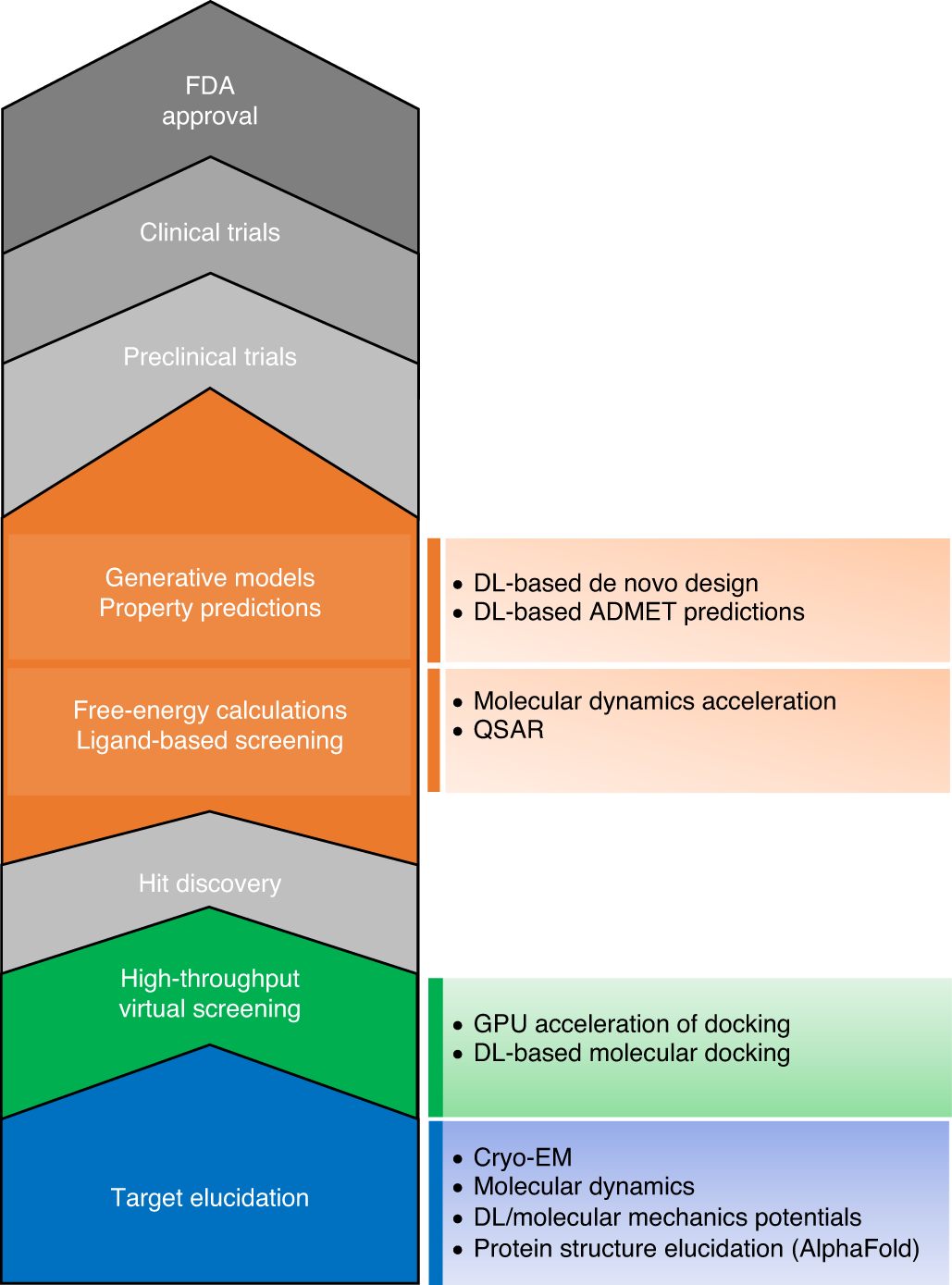

The transformational role of GPU computing and deep learning in drug discovery | Nature Machine Intelligence

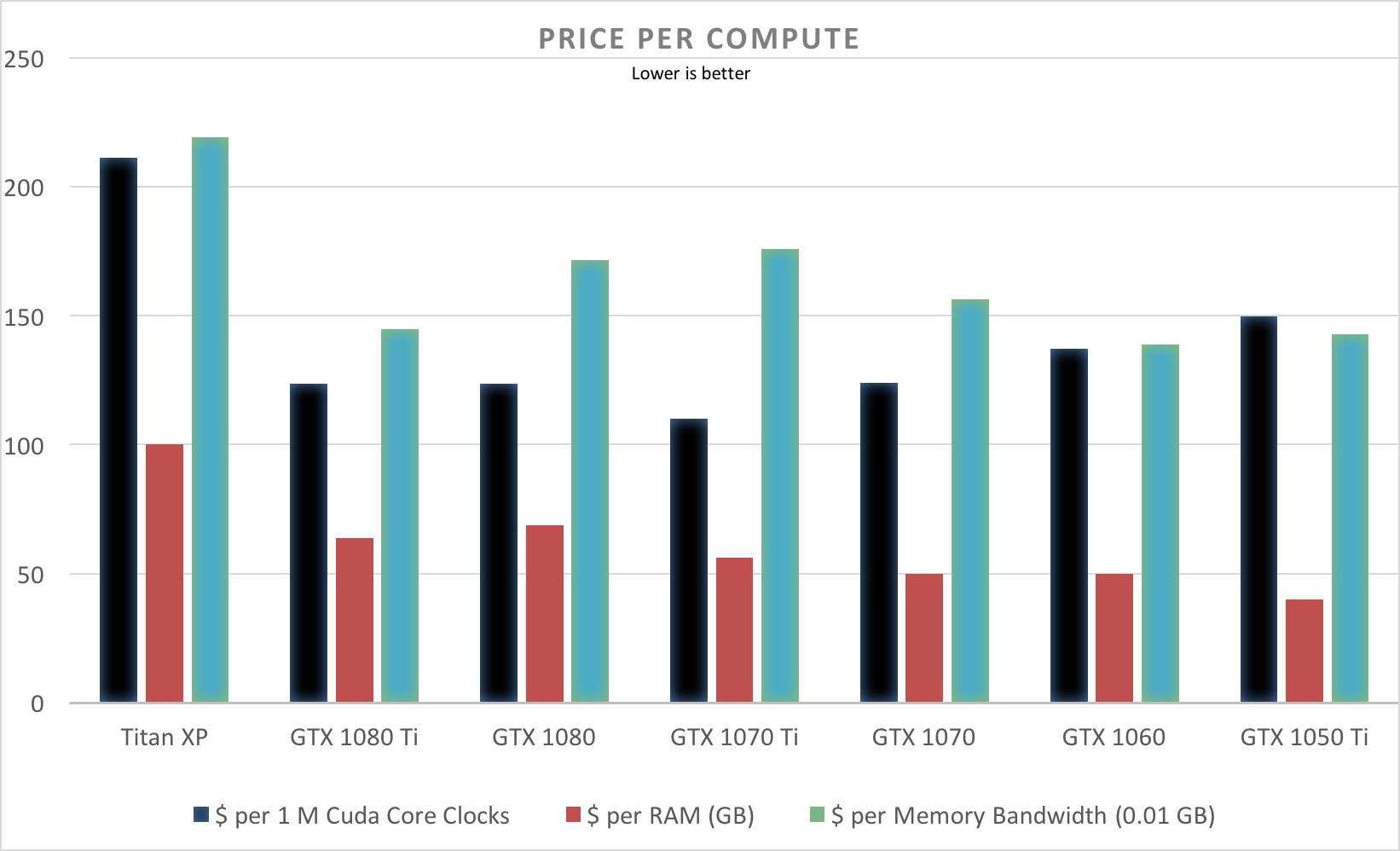

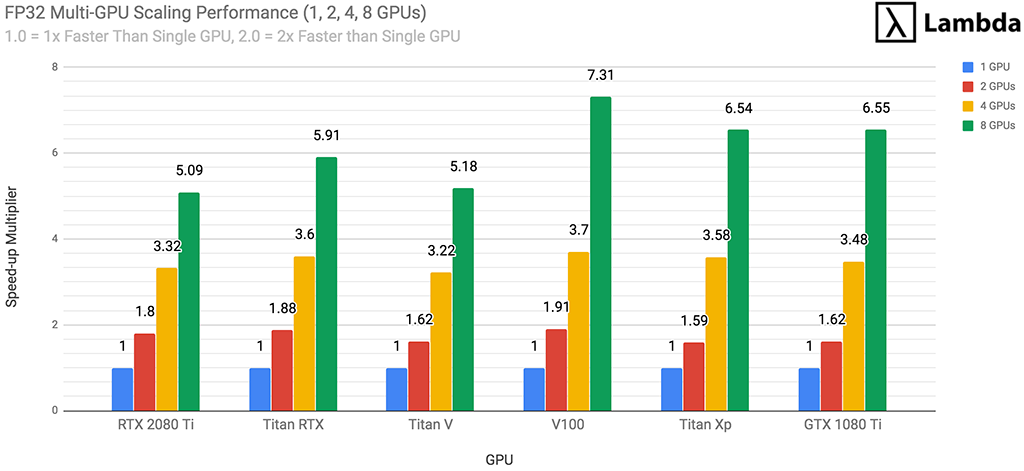

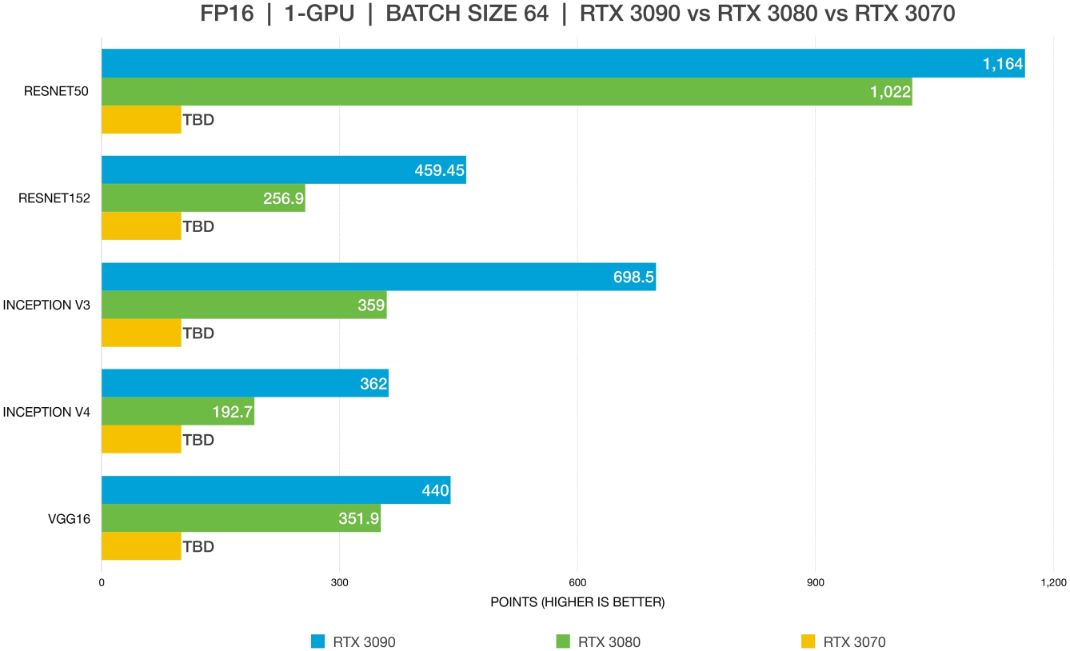

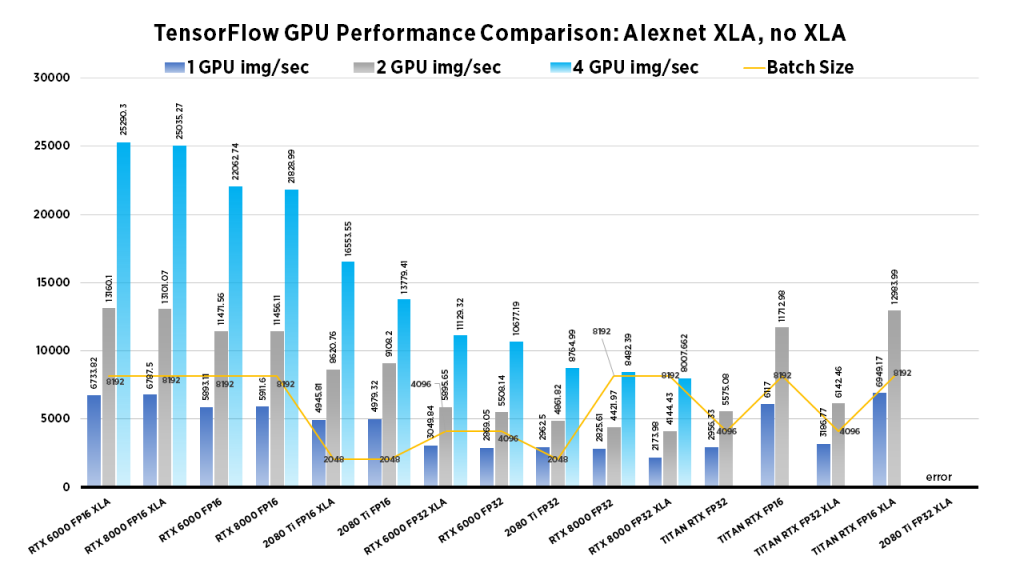

Deep Learning Benchmarks Comparison 2019: RTX 2080 Ti vs. TITAN RTX vs. RTX 6000 vs. RTX 8000 Selecting the Right GPU for your Needs | Exxact Blog

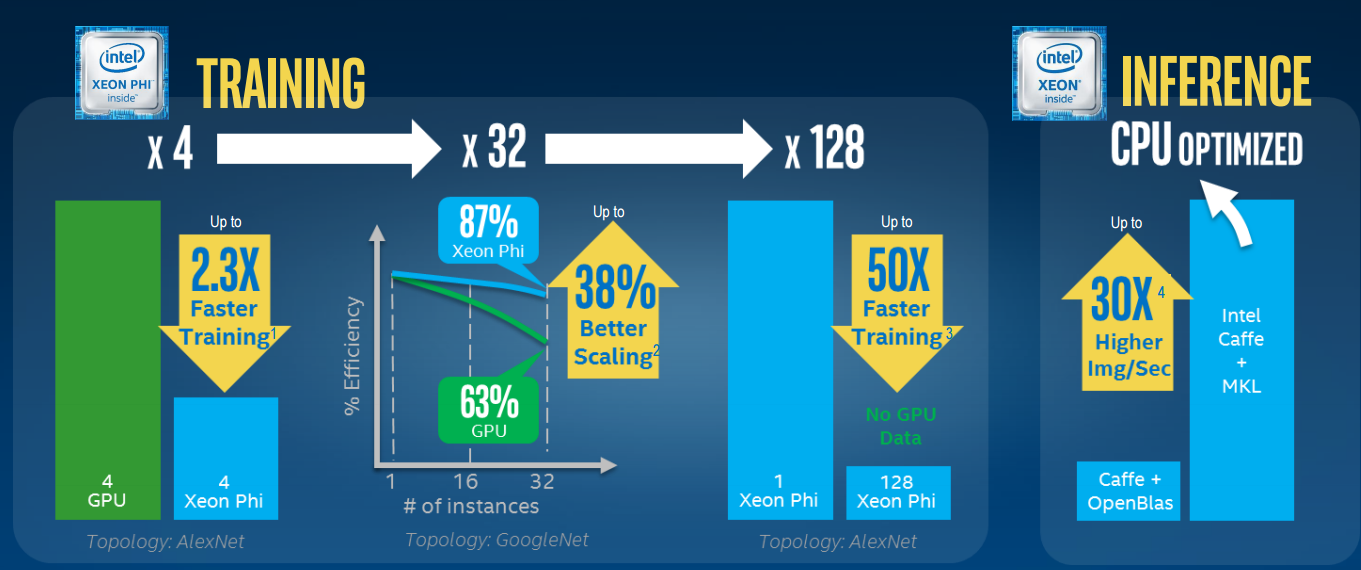

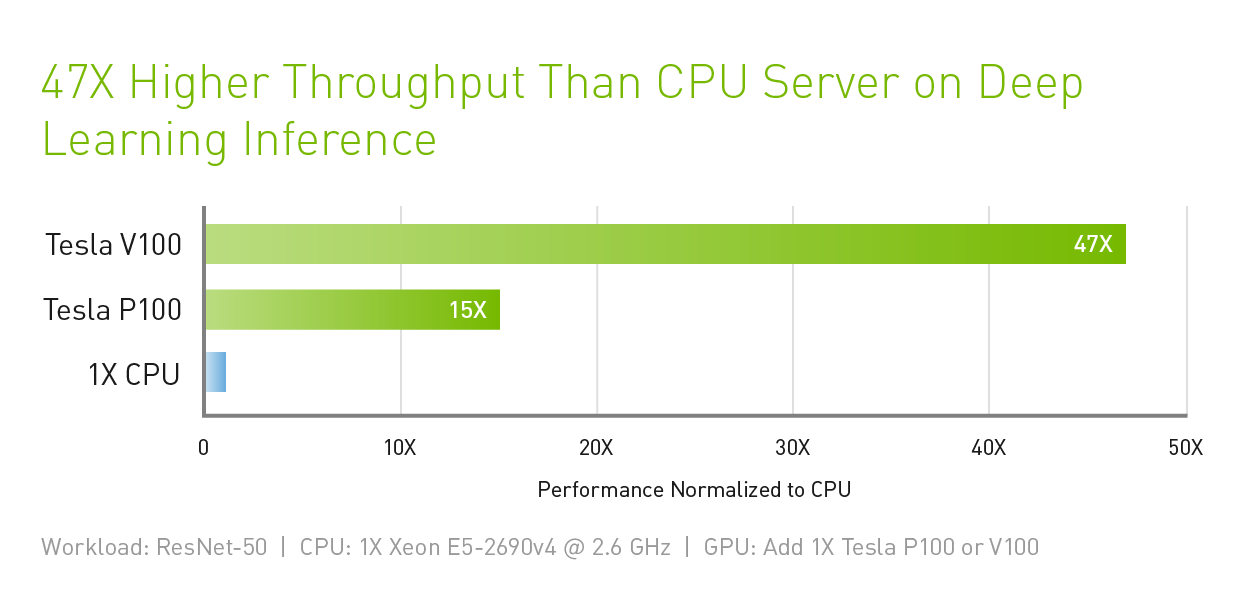

Is Your Data Center Ready for Machine Learning Hardware? | Data Center Knowledge | News and analysis for the data center industry

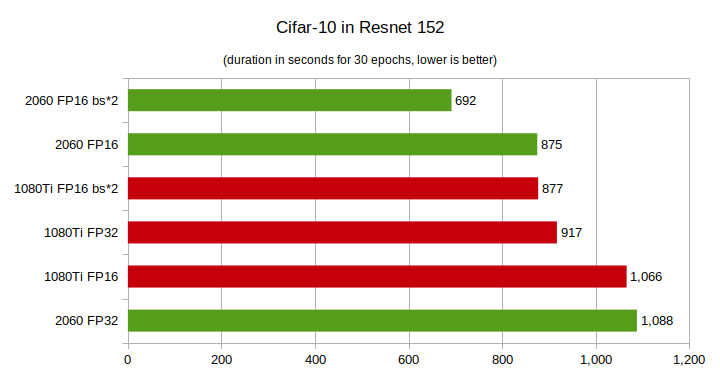

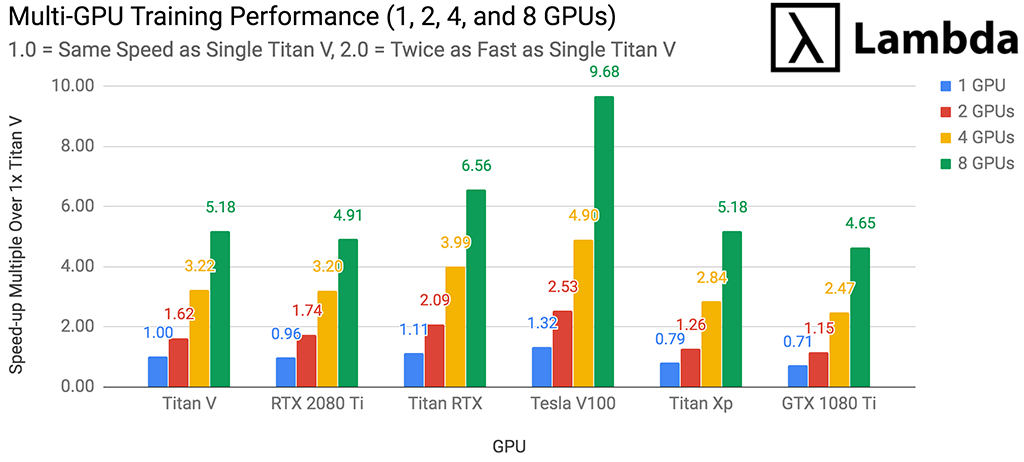

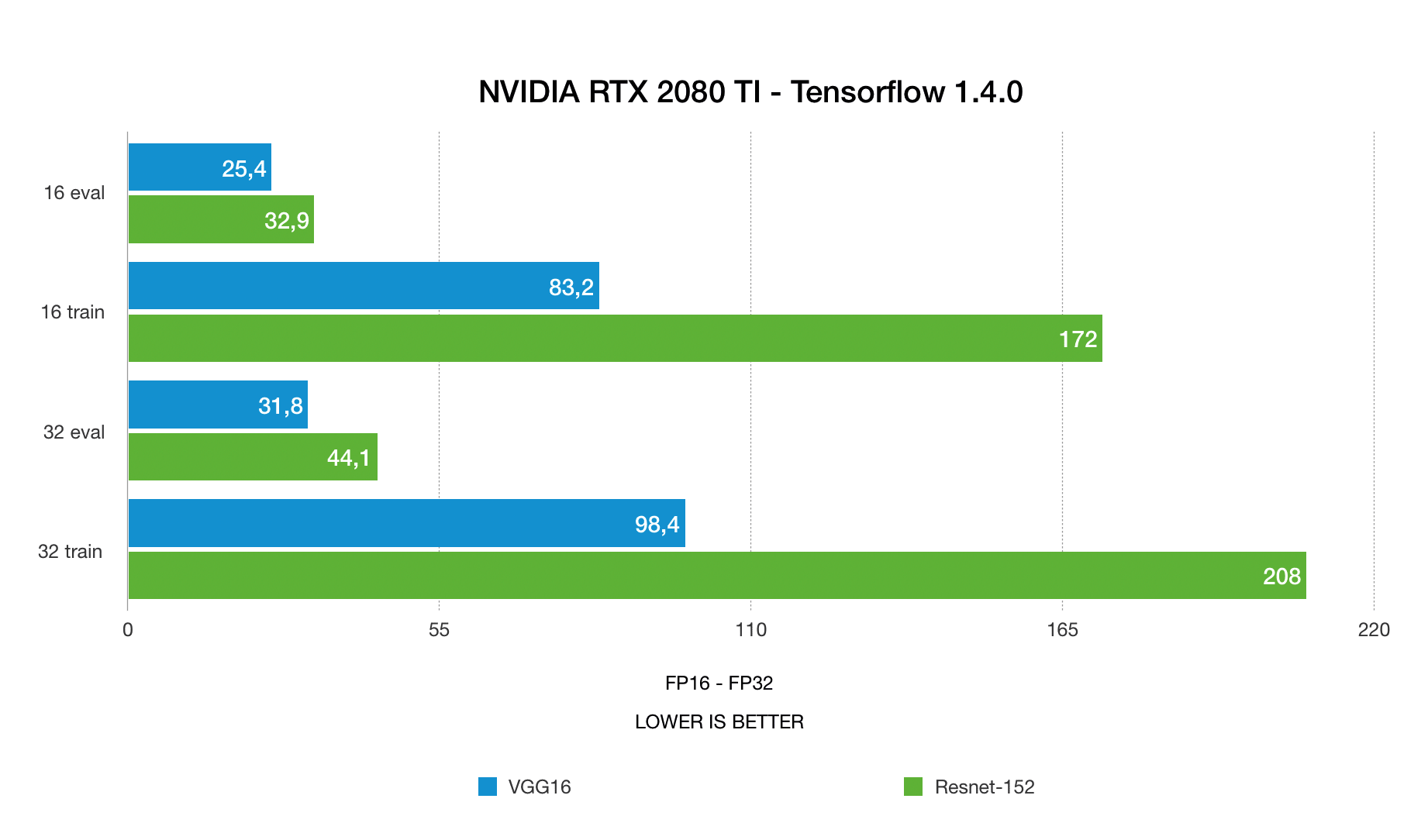

1080 Ti vs RTX 2080 Ti vs Titan RTX Deep Learning Benchmarks with TensorFlow - 2018 2019 2020 | BIZON Custom Workstation Computers, Servers. Best Workstation PCs and GPU servers for AI/ML,